Unlocking Intuitive Design: Why UX Starts with Familiar Components

Date: November 17, 2024

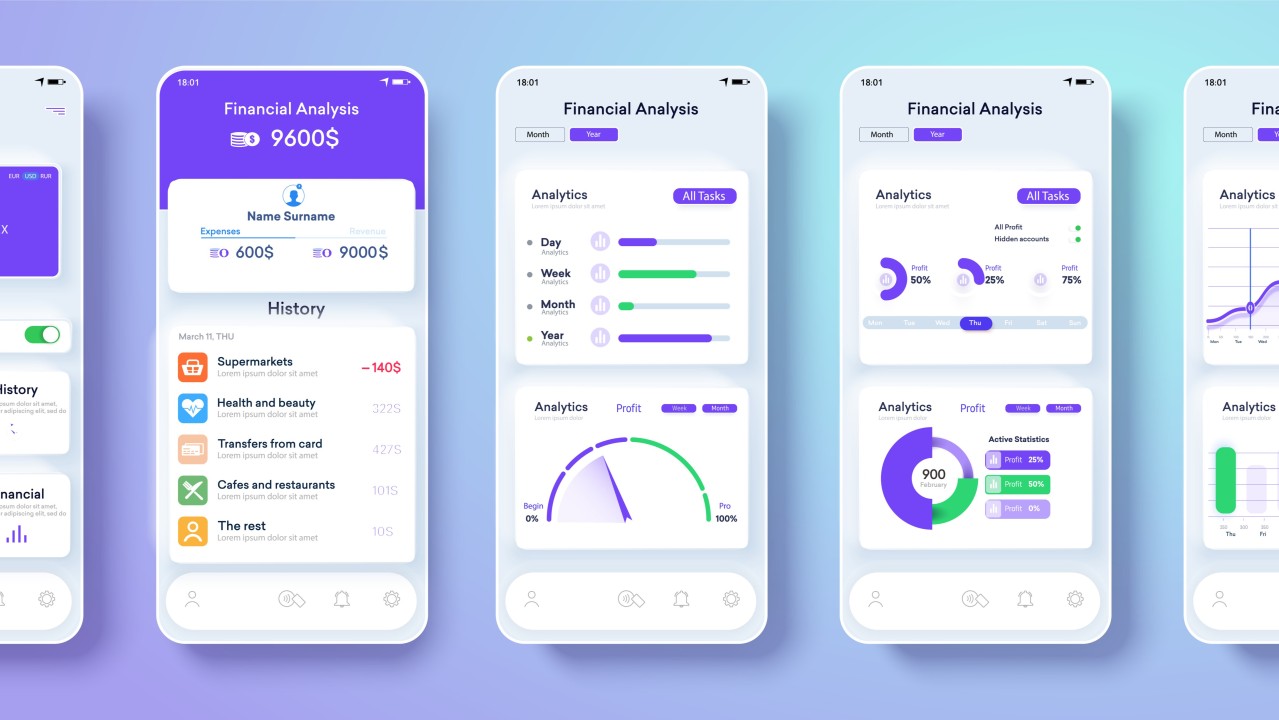

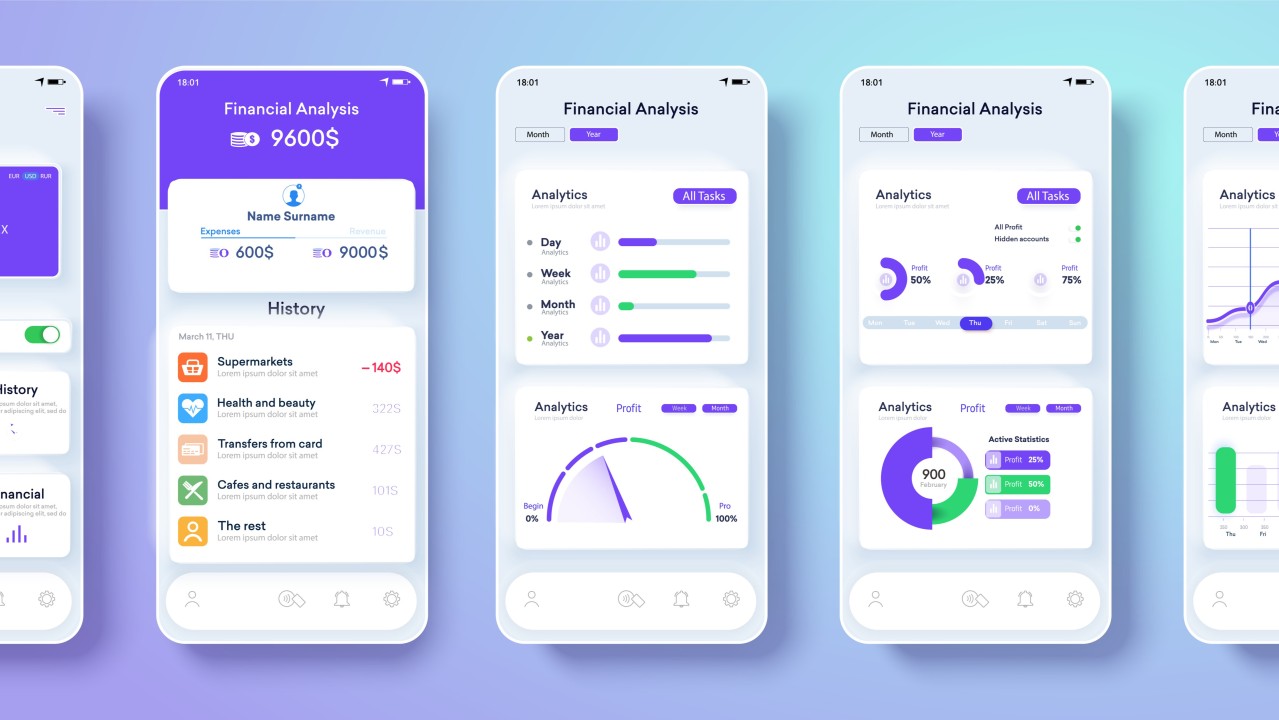

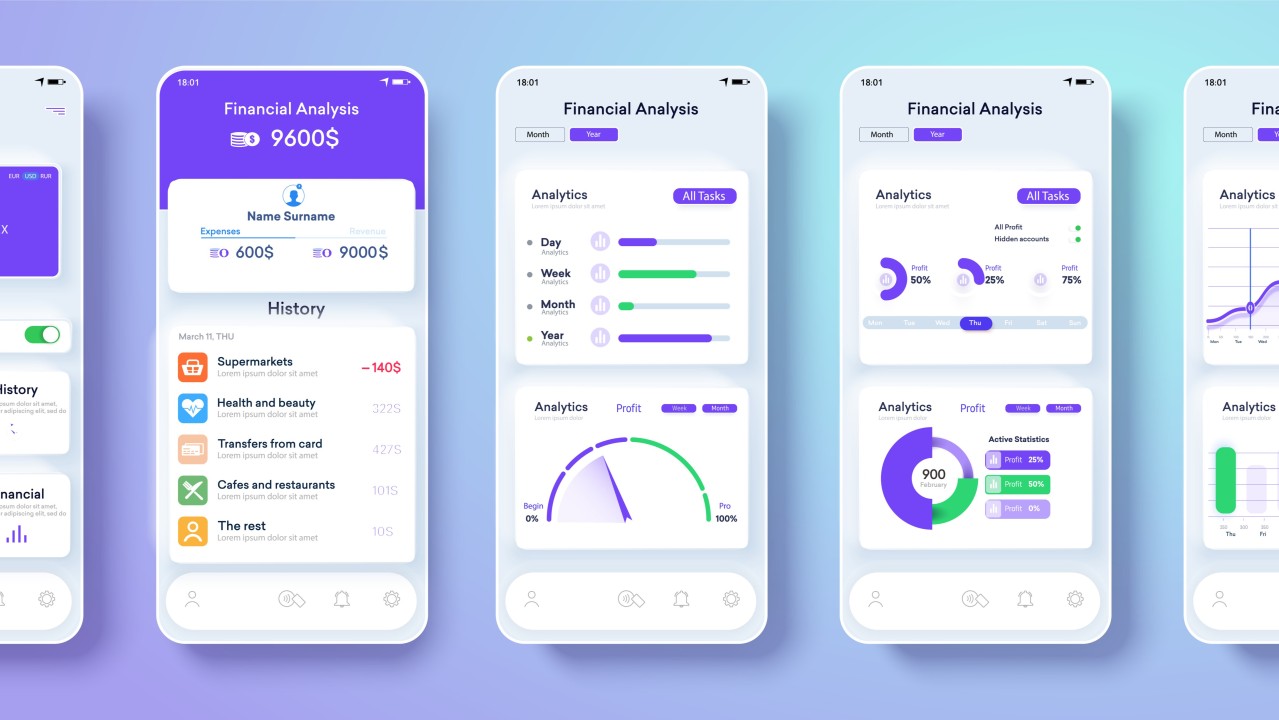

Imagine this: You’re rushing through your morning routine, hands full. You call out, “Hey Alex, what’s on my schedule today?” A soothing voice replies, outlining your meetings while your device displays your calendar. With a quick tap on your smartwatch, you reschedule an afternoon appointment. This seamless integration of voice commands, touch interactions, and visual feedback illustrates the power of multi-modal interfaces, the future of user experience design.

Core Features of Multi-Modal Interfaces

Adaptability: Users can switch between input methods based on context and preferences. For example, an operator might use voice commands to monitor machine performance on the production floor and later switch to a desktop interface to analyze detailed data and generate reports.

Intuitiveness: By mimicking natural communication styles, such as conversational voice commands, these systems make interactions feel effortless and familiar. This reduces the learning curve and fosters a more engaging experience.

Accessibility: Offering multiple input options ensures inclusivity for users with diverse abilities or preferences. For instance, someone with limited mobility can use voice commands or eye-tracking to navigate a system effectively.

Industry insight

The global multimodal AI market is projected to reach $10.89 billion by 2030, growing at a CAGR of 35.8% (source: Grand View Research).

This rapid growth underscores the transformative potential of multi-modal interfaces in shaping the future of user experiences.

Key Technologies Powering Multi-Modal Interfaces

The seamless experience of multi-modal design is powered by advanced technologies working together:

AI and Large Language Models (LLMs): Advanced LLMs, such as GPT-4, enable context-aware and conversational interactions for both text and voice, enhancing natural language understanding.

Computer Vision: This technology analyzes gestures, facial expressions, and other visual inputs to make interactions more responsive and intuitive.

Natural Language Processing (NLP): NLP bridges the gap between human communication and machine understanding, powering voice and text-based interactions.

Sensor Fusion: Combining data from multiple sensors—such as touch, motion, and voice—provides a holistic understanding of user behavior and context.

These technologies collectively enable more natural, adaptive, and user-friendly interactions.
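To make sensor fusion a little more concrete, here is a minimal sketch of one simple approach: each modality proposes an intent with a confidence score, and the system picks the intent with the highest weighted total. The names `ModalityReading` and `fuse_intents`, the intent labels, and the weighting scheme are all illustrative assumptions, not a description of how any particular product works.

```python
from dataclasses import dataclass


@dataclass
class ModalityReading:
    modality: str      # hypothetical label, e.g. "voice", "touch", "gesture"
    intent: str        # the intent this modality's recognizer proposed
    confidence: float  # recognizer score, assumed to be in [0, 1]


def fuse_intents(readings, weights=None):
    """Return the intent with the highest weighted confidence total, or None."""
    weights = weights or {}
    totals = {}
    for reading in readings:
        # Modalities without an explicit weight count equally (weight 1.0).
        weight = weights.get(reading.modality, 1.0)
        totals[reading.intent] = totals.get(reading.intent, 0.0) + weight * reading.confidence
    if not totals:
        return None
    return max(totals, key=totals.get)


readings = [
    ModalityReading("voice", "reschedule_meeting", 0.6),
    ModalityReading("touch", "reschedule_meeting", 0.9),
    ModalityReading("gesture", "dismiss", 0.7),
]
best_intent = fuse_intents(readings)
```

Here voice and touch agree, so their combined score outweighs the lone gesture reading. A real system would likely also account for timing and noise, which this sketch leaves out.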

Real-World Applications

Healthcare: Radiologists can use voice commands to manipulate scans, keeping their attention on patient care while working more efficiently. Similarly, Nuance’s Dragon Medical One lets clinicians dictate documentation hands-free, which streamlines workflows.

Retail: Imagine walking into a store and asking a device to locate a product. The system highlights the correct shelf, saving time and effort. Checkout is changing too: technologies like Amazon’s Just Walk Out combine computer vision, sensor fusion, and deep learning so shoppers can skip the checkout line.

Accessibility: Microsoft’s Eye Control for Windows lets people with mobility challenges operate an on-screen mouse, keyboard, and text-to-speech using eye tracking. Alongside other inputs such as voice commands, this kind of eye tracking enables greater independence and inclusivity.

Privacy and Security in Multi-Modal Interfaces

Multi-modal interfaces collect voice, gesture, and other sensitive inputs, so robust security is crucial for protecting user data. Four practices help safeguard that information from breaches, uphold user trust, and meet legal obligations:

Encryption: Encrypting data both at rest and in transit ensures that sensitive information remains confidential and protected from unauthorized access.

Anonymization: Techniques such as data masking remove or obscure personally identifiable information, so data can be analyzed with far less risk to privacy.

Secure Storage Practices: Implementing secure storage solutions ensures data integrity and availability while protecting against loss or tampering.

Compliance with GDPR and CCPA: Adhering to data protection regulations is vital for legal compliance and maintaining user trust.

By integrating these measures, organizations can uphold privacy standards and protect user data.
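As a rough illustration of data masking, the sketch below redacts email addresses and phone numbers from a voice transcript and replaces a user ID with a keyed hash. The function names and regular expressions are assumptions made for this example, and the patterns are deliberately simple, so they will miss many real-world formats. Note that a keyed hash is a pseudonym rather than true anonymization, since anyone holding the key can link records back to a user.

```python
import hashlib
import hmac
import re

# Simplified, illustrative patterns; production systems need broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def mask_transcript(text):
    """Replace email addresses and phone numbers with placeholder tokens."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def pseudonymize_user_id(user_id, secret_key):
    """Return a stable keyed hash (HMAC-SHA256) to use in place of the raw ID."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


masked = mask_transcript("Email me at jo@example.com or call 555-123-4567.")
pseudonym = pseudonymize_user_id("user-42", b"example-secret-key")
```

Masking at the point of collection, before transcripts reach analytics or logs, keeps the raw identifiers out of downstream systems altogether.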

Design Considerations for Multi-Modal Success

Creating exceptional multi-modal interfaces requires thoughtful design principles that balance usability, privacy, and adaptability.

Transparent Opt-Out Options: Provide users with an easy way to revoke their consent, whether for specific data collection modalities (e.g., voice commands or gesture tracking) or the system as a whole.

Simple User Interfaces: Consider privacy dashboards, where users can easily view and manage how their data is being used.

Data Deletion Requests: Include a straightforward process for users to request the deletion of previously collected data. This supports compliance with privacy regulations like GDPR and CCPA and reinforces user autonomy.

Dynamic Helpful Feedback: Notify users how their changes impact their experience (e.g., "Turning off voice input may limit functionality"). This keeps expectations clear while respecting their choices.

Context Awareness: Ensure the interface recognizes and adapts to the user’s environment, such as enabling hands-free voice commands while driving or prioritizing touch-based inputs in desk-bound scenarios. Context awareness ensures the system remains relevant and functional in changing conditions.

Dynamic Adaptation: Go beyond recognizing the context by dynamically adjusting the interface in real time. For example:

Seamless Transitions: Enable fluid shifts between modalities, such as moving from voice commands to touch inputs, without disrupting the interaction. This ensures users can complete tasks efficiently across different input methods.

Harmonized Modalities: Design input methods to work together rather than compete, creating a cohesive experience. For example, using voice input to initiate a task and touch controls to refine it lets the two modalities complement rather than duplicate each other.

Multi-Sensory Synergy: Leverage the strengths of each modality to enrich interactions. For instance, pairing visual feedback with voice commands reinforces clarity and ensures users can engage with the interface more effectively.
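Several of these considerations can be sketched together in a few lines: per-modality opt-out, feedback when a setting changes, and context-aware selection of an input method. Everything here is hypothetical, including the `ModalityPreferences` class, the context names, and the priority lists, and is meant only to show how the ideas might fit together.

```python
from dataclasses import dataclass, field

# Hypothetical priority order of input methods for each context.
CONTEXT_PRIORITY = {
    "driving": ["voice"],                   # hands-free only
    "desk": ["touch", "voice", "gesture"],  # touch first when desk-bound
}
DEFAULT_PRIORITY = ["touch", "voice", "gesture"]


@dataclass
class ModalityPreferences:
    enabled: dict = field(
        default_factory=lambda: {"voice": True, "touch": True, "gesture": True}
    )

    def opt_out(self, modality):
        """Disable one modality and return a feedback message for the user."""
        if modality not in self.enabled:
            raise ValueError(f"Unknown modality: {modality}")
        self.enabled[modality] = False
        return f"Turning off {modality} input may limit functionality."


def choose_modality(context, prefs):
    """Pick the first enabled modality suited to the context, or None."""
    for modality in CONTEXT_PRIORITY.get(context, DEFAULT_PRIORITY):
        if prefs.enabled.get(modality, False):
            return modality
    return None


prefs = ModalityPreferences()
before_opt_out = choose_modality("driving", prefs)
message = prefs.opt_out("voice")
after_opt_out = choose_modality("driving", prefs)
```

The design choice worth noting is that the user's consent always wins: once voice is switched off, the driving context offers no input method at all rather than quietly re-enabling it.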

Conclusion

The rise of multi-modal interfaces presents an exciting opportunity for UX designers to push boundaries and redefine interactions. By focusing on adaptability, accessibility, and privacy, we can create experiences that are not just functional but truly transformative.

What’s your vision for the future of multi-modal design?

Copyright 2025 by Lana Holston
