AI-First Design: A Comprehensive Guide for UX Designers

Date: February 27, 2025

Table of Contents

Introduction: Why Designers Should Care About AI-First Design

What is AI-First Design?

Understanding Retrieval-Augmented Generation (RAG)

Vector Databases: The Engine Behind Intelligent Search

Traditional Keyword Search vs. Vector-Based Similarity Search

Intents: The Backbone of Conversational AI

Connecting the Dots: How Intents Work with RAG

Large Language Models (LLMs) and Their Role in UX

Challenges and Ethical Considerations

Conclusion: Applying AI Concepts in Your Design Work

Introduction: Why Designers Should Care About AI-First Design

As the digital landscape evolves, the integration of artificial intelligence (AI) into design practices is becoming increasingly vital. AI-first design represents a paradigm shift from traditional methodologies, focusing on creating dynamic, user-centered experiences that leverage AI capabilities. For UX designers, understanding AI concepts — such as Retrieval-Augmented Generation (RAG), vector databases, intents, and Large Language Models (LLMs) — is essential for crafting innovative products that truly resonate with users.

Rather than being intimidated by these technical concepts, designers who embrace them gain a powerful new toolkit for solving user problems. This guide breaks down these technologies into accessible, actionable insights that you can immediately apply to your design process.

What is AI-First Design?

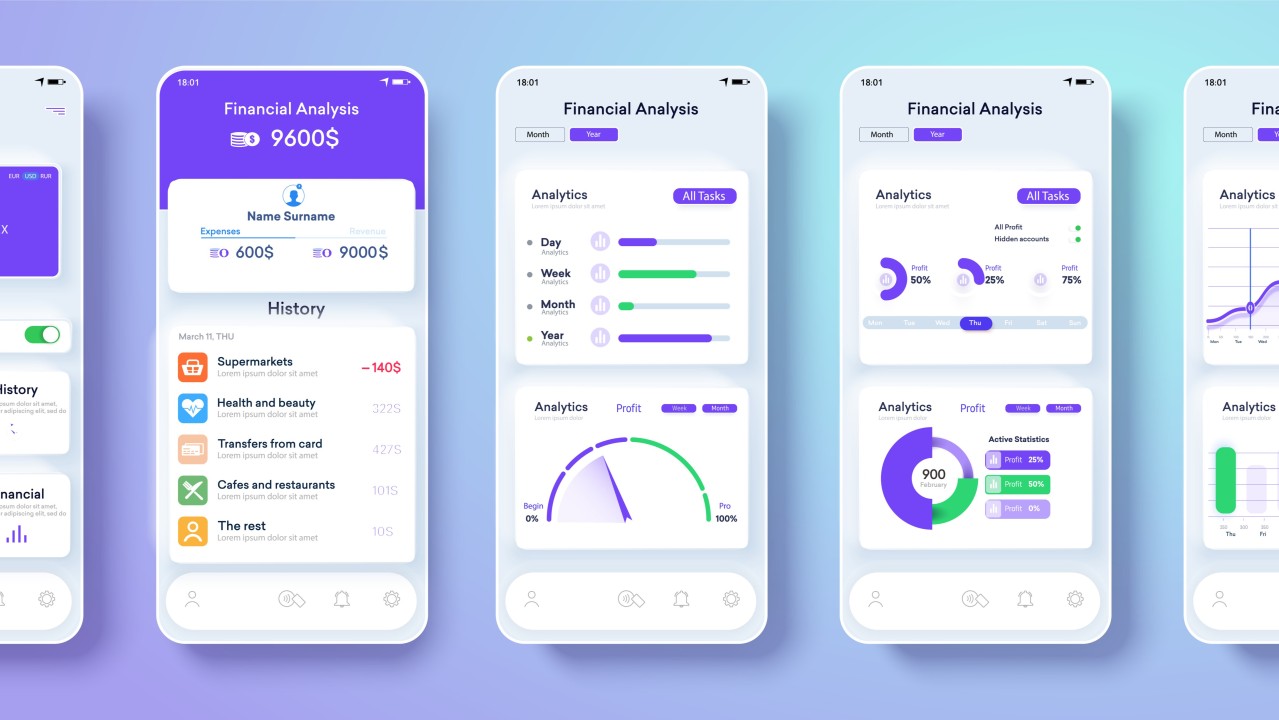

AI-first design emphasizes the transition from static user flows to dynamic, AI-driven interactions. This approach prioritizes:

Adaptive Interfaces: Interfaces that learn from user behavior.

Intent Recognition: Systems that understand and predict what users are trying to accomplish.

Personalization: Tailored experiences based on individual needs.

Contextual Awareness: Designs that consider the user’s situation and environment.

Unlike traditional design approaches, where user paths are fixed and predetermined, AI-first design creates experiences that evolve in real time based on user needs and behaviors. Rather than designing specific screens, you’re designing intelligent systems that adapt as conversations and interactions progress.

Understanding Retrieval-Augmented Generation (RAG)

Retrieval-Augmented Generation (RAG) bridges the gap between the general knowledge of Large Language Models (LLMs) and the need for current, domain-specific data.

How RAG Works (In Simple Terms)

Imagine designing a customer service chatbot. With a standalone LLM, the bot can only answer from the knowledge it acquired during training, which knows nothing about your latest products or policies. With RAG, your chatbot can:

Retrieve relevant information from your company’s knowledge base, product documentation, or other sources.

Augment the user’s question with this specific contextual data.

Generate a response that blends the LLM’s capabilities with your proprietary information.

Key Components of RAG:

Retrieval Component: Searches and extracts relevant context from external databases.

Augmentation Component: Merges user queries with the retrieved context.

Answer Generation Component: The LLM synthesizes the enriched input to produce accurate responses.
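
The three components above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the knowledge base entries are made up, retrieval uses simple word overlap as a stand-in for vector similarity, and `generate` is a placeholder where a real LLM call would go.

```python
import re
from dataclasses import dataclass


@dataclass
class Document:
    title: str
    text: str


# Hypothetical knowledge base; in a real product this would be your own content.
KNOWLEDGE_BASE = [
    Document("Returns", "You can return any item within 30 days of delivery for a full refund."),
    Document("Shipping", "Standard shipping takes 3 to 5 business days."),
    Document("Warranty", "Every device includes a one year limited warranty."),
]


def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, top_k: int = 1) -> list[Document]:
    """Retrieval: rank documents by the number of words shared with the question.

    Word overlap is a stand-in for illustration; RAG systems typically
    use vector similarity for this step.
    """
    question_words = tokenize(question)
    scored = [(len(question_words & tokenize(doc.text)), doc) for doc in KNOWLEDGE_BASE]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:top_k] if score > 0]


def augment(question: str, context: list[Document]) -> str:
    """Augmentation: merge the user's question with the retrieved context."""
    context_block = "\n".join(f"[{doc.title}] {doc.text}" for doc in context)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context_block}\n"
        f"Question: {question}"
    )


def generate(prompt: str) -> str:
    """Generation: placeholder for a real LLM call (an assumption of this sketch)."""
    return f"(LLM response to a prompt of {len(prompt)} characters)"


def answer(question: str) -> dict:
    context = retrieve(question)
    prompt = augment(question, context)
    # Returning the source titles lets the interface show where an answer came from.
    return {"answer": generate(prompt), "sources": [doc.title for doc in context]}


print(answer("How do I return an item for a refund?"))
```

For designers, the detail worth noticing is the `sources` field: because retrieval happens as a separate step, the interface can show which documents an answer was grounded in.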

Design Implications: With RAG, you can design interfaces that provide:

More accurate and timely information.

Access to proprietary data.

Reduced hallucinations (or inaccuracies) by grounding responses in verified information.

Overall more trustworthy AI experiences.

Vector Databases: The Engine Behind Intelligent Search

At the heart of effective RAG systems are vector databases, which power similarity-based searches beyond simple keyword matching.

What Are Vector Databases?

Vector databases store information as mathematical representations (vectors), enabling the system to:

Capture Semantic Meaning: Convert text into numerical vectors that represent the underlying meaning rather than just specific words.

Similarity Search: Retrieve content based on conceptual similarity rather than just matching keywords exactly.

How It Works:

Embedding Generation: Text is transformed into vectors that encode semantic content.

Similarity Search: When a user queries, the system locates vectors that are similar in meaning.

Contextual Retrieval: The most relevant content is fetched to answer the query based on this similarity.
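
To make these steps concrete, here is a small sketch of similarity search in Python. The three-dimensional vectors are written by hand purely for illustration; real embeddings come from a trained model and usually have hundreds of dimensions. Cosine similarity is one common way to compare vectors, though not the only one.

```python
import math

# Hand-written vectors standing in for real embeddings.
# The dimensions loosely mean: (account access, billing, shipping).
CONTENT = {
    "How to reset your password": [0.9, 0.1, 0.0],
    "Updating your payment method": [0.1, 0.9, 0.1],
    "Tracking your delivery": [0.0, 0.1, 0.9],
}


def cosine_similarity(a, b):
    """Return 1.0 for vectors pointing the same way, 0.0 for unrelated ones."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def search(query_vector, top_k=2):
    """Rank stored content by similarity to the query vector."""
    ranked = sorted(
        CONTENT.items(),
        key=lambda item: cosine_similarity(query_vector, item[1]),
        reverse=True,
    )
    return [title for title, _ in ranked[:top_k]]


# Assume an embedding model turned "I can't log in" into this vector.
query = [0.8, 0.2, 0.1]
print(search(query))
# → ['How to reset your password', 'Updating your payment method']
```

The query never mentions the word "password", yet the password article ranks first because its vector points in nearly the same direction as the query's.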

Traditional Keyword Search vs. Vector-Based Similarity Search

Traditional Keyword Search:

Mechanism: Matches exact words or phrases in a query with those stored in the database.

Limitations:

Literal Matching: Only finds results that contain the exact terms, missing semantically related content.

Synonym Ignorance: Fails to understand context or synonyms — if a query uses a different word, relevant results may be overlooked.

Rigid Boundaries: Can lead to irrelevant results if a keyword appears in an unrelated context.
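
These limitations are easy to reproduce. The sketch below is a deliberately naive keyword search over three made-up help article titles; real keyword engines are more sophisticated (stemming, ranking), but the failure modes are the same in kind.

```python
import re

# Made-up help center article titles for illustration.
ARTICLES = [
    "Recover access to your account if you forgot your login credentials",
    "Our password policy: why we never ask for your password by email",
    "Change the email address linked to your profile",
]


def keyword_search(query: str) -> list[str]:
    """Return the articles that literally contain every word of the query."""
    terms = re.findall(r"[a-z]+", query.lower())
    results = []
    for article in ARTICLES:
        words = set(re.findall(r"[a-z]+", article.lower()))
        if all(term in words for term in terms):
            results.append(article)
    return results


# Literal matching: the relevant recovery article uses different words, so it is missed.
print(keyword_search("reset password"))  # → []

# Rigid boundaries: the only match is a policy article, not a how-to.
print(keyword_search("password"))
```

A user who types "reset password" gets nothing, even though the first article is exactly what they need.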

Vector-Based Similarity Search:

Mechanism: Converts text into vectors that capture the semantic meaning, then compares these vectors to determine similarity.

Advantages:

Conceptual Understanding: Recognizes and retrieves content that is conceptually similar, even if the exact words differ.

Flexibility: Adapts to different phrasings and synonyms, providing more relevant and context-aware results.

Enhanced User Experience: Delivers results that better align with the user’s intent, rather than relying solely on literal word matching.

UX Benefits:

Natural Language Queries: Users can ask questions in everyday language without worrying about specific keywords.

Improved Relevance: Results are more closely aligned with the intent behind the query, enhancing the overall user experience.

Reduced Friction: Users are not required to conform to rigid search terms, making the search process more intuitive.

Intents: The Backbone of Conversational AI

Intents represent the goals behind user inputs. Understanding intents helps map interactions to the correct actions.

Defining Intents

An intent is the underlying purpose of a user’s query. For example:

“What’s the weather like today?” → WeatherInquiry

“Book a table for two at 7pm” → Reservation

“How do I reset my password?” → PasswordHelp

Designing for Intent Recognition:

User-Centric Research: Understand how users express their needs.

Natural Language Variations: Consider different phrasings for the same intent.

Error Recovery: Design fallbacks for misunderstood queries.
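
As a rough sketch of how these three principles fit together, the Python below matches an utterance against example phrasings for each intent and falls back when nothing is close enough. The intent names come from the examples above; the extra phrasings, the word-overlap scoring (a simple stand-in for a trained classifier), and the 0.3 threshold are all illustrative assumptions.

```python
import re

# Several phrasings per intent, ideally collected from user research.
INTENT_EXAMPLES = {
    "WeatherInquiry": ["what's the weather like today", "will it rain tomorrow"],
    "Reservation": ["book a table for two at 7pm", "reserve a table for tonight"],
    "PasswordHelp": ["how do i reset my password", "i forgot my password"],
}
FALLBACK = "Fallback"


def tokenize(text):
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def detect_intent(utterance, threshold=0.3):
    """Return (intent, score) for the closest example phrasing.

    The score is the share of words the utterance and the example have
    in common. Below the threshold, the fallback intent is returned so
    the interface can ask a clarifying question instead of guessing.
    """
    words = tokenize(utterance)
    best_intent, best_score = FALLBACK, 0.0
    for intent, examples in INTENT_EXAMPLES.items():
        for example in examples:
            example_words = tokenize(example)
            score = len(words & example_words) / len(words | example_words)
            if score > best_score:
                best_intent, best_score = intent, score
    if best_score < threshold:
        return FALLBACK, best_score
    return best_intent, best_score


print(detect_intent("I forgot my password again")[0])        # → PasswordHelp
print(detect_intent("Can you reserve a table for four?")[0])  # → Reservation
print(detect_intent("Tell me a joke")[0])                     # → Fallback
```

The threshold is a design decision as much as a technical one: set it too low and the system confidently acts on the wrong intent; set it too high and users hit the fallback too often.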

Visual Aid Suggestion: A diagram showing examples of intents and possible user phrasings grouped by common goals.

Design Strategy: Group user needs by purpose rather than by specific screens or features, and create conversation flows that match these intentions.

Connecting the Dots: How Intents Work with RAG

This section explains how integrating intents with RAG creates a seamless AI-driven experience.

The Intent-RAG Workflow

Intent Detection: The system identifies what the user is trying to accomplish.

Contextual Retrieval: RAG fetches relevant information based on the detected intent.

Personalized Response: The LLM generates a tailored response by combining intent with the retrieved data.

A Practical Example:

Imagine a travel planning assistant:

User Query: “I want to visit somewhere warm in January with great food.”

Detected Intent: TravelDestinationSearch.

RAG Process: The system searches the vector database for destinations that combine warm January weather with a strong culinary reputation.

Response: “Barcelona might be a great option — January temperatures average around 55°F (13°C), and the city is known for its vibrant food scene. Would you like restaurant recommendations?”
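
The same example can be traced through a short Python sketch of the workflow. Everything here is simplified and hypothetical: the destination records and their tags are invented, a keyword rule stands in for the intent classifier, tag matching stands in for a vector database query, and a text template stands in for the LLM.

```python
import re
from dataclasses import dataclass, field

# Invented records; the tags and source paths are placeholders.
DESTINATIONS = [
    {"name": "Barcelona", "tags": {"warm", "food"}, "source": "guides/barcelona"},
    {"name": "Lisbon", "tags": {"food", "coast"}, "source": "guides/lisbon"},
    {"name": "Reykjavik", "tags": {"cold", "nature"}, "source": "guides/reykjavik"},
]


@dataclass
class AssistantReply:
    intent: str
    text: str
    sources: list[str] = field(default_factory=list)


def words_in(text: str) -> set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))


def detect_intent(query: str) -> str:
    """Step 1: a keyword rule standing in for a real intent classifier."""
    travel_words = {"visit", "travel", "trip", "vacation"}
    return "TravelDestinationSearch" if travel_words & words_in(query) else "Unknown"


def retrieve(query: str, top_k: int = 1) -> list[dict]:
    """Step 2: rank destinations by how many of their tags the query mentions."""
    query_words = words_in(query)
    scored = [(len(d["tags"] & query_words), d) for d in DESTINATIONS]
    matches = [pair for pair in scored if pair[0] > 0]
    matches.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in matches[:top_k]]


def respond(query: str) -> AssistantReply:
    intent = detect_intent(query)
    if intent != "TravelDestinationSearch":
        return AssistantReply(intent, "Could you tell me more about what you need?")
    matches = retrieve(query)
    if not matches:
        return AssistantReply(intent, "I could not find a match. What matters most to you?")
    best = matches[0]
    # Step 3: a template standing in for LLM generation.
    text = f"{best['name']} might be a great option. Would you like restaurant recommendations?"
    return AssistantReply(intent, text, sources=[best["source"]])


reply = respond("I want to visit somewhere warm in January with great food.")
print(reply.intent, "|", reply.text, "|", reply.sources)
```

Note that the reply carries the detected intent and the sources alongside the text, so the interface has what it needs to show where an answer came from and to let the user correct a misread intent.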

Design Considerations:

Transparency: Clearly indicate where the information comes from.

User Control: Enable users to refine or correct the detected intent.

Progressive Disclosure: Present information incrementally, allowing users to dig deeper if desired.

Large Language Models (LLMs) and Their Role in UX

LLMs are the core “brain” powering many AI interactions. They process and generate human-like text, bridging user intents and contextual data.

Designing Interfaces with LLMs:

Clarity & Brevity: Keep interactions concise and focused.

Context Awareness: Ensure the system passes relevant conversation history to the LLM so that responses take previous interactions into account.

Human-AI Collaboration: Design systems where the AI assists human judgment rather than replacing it.

Enhanced UX Capabilities:

Summarization: Condense complex data into digestible pieces.

Personalization: Tailor interactions based on individual user profiles.

Multi-turn Conversations: Maintain context over extended interactions.

Multimodal Understanding: Process and integrate text with visual data.
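
Multi-turn context is worth a closer look, because it is usually something the product has to supply: the application typically resends recent conversation history with each request. The Python below is a minimal sketch of that idea, assuming a simple "keep the last N messages" policy; the class name, the limit, and the prompt format are all illustrative.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    # Illustrative limit; real limits depend on the model's context window.
    max_messages: int = 6
    messages: list[dict[str, str]] = field(default_factory=list)

    def add(self, role: str, content: str) -> None:
        """Record a message and drop the oldest ones beyond the limit."""
        self.messages.append({"role": role, "content": content})
        self.messages = self.messages[-self.max_messages:]

    def build_prompt(self, user_message: str) -> str:
        """Add the new user message and return the history as one prompt."""
        self.add("user", user_message)
        return "\n".join(f"{m['role']}: {m['content']}" for m in self.messages)


chat = Conversation(max_messages=4)
chat.add("user", "I want to visit somewhere warm in January.")
chat.add("assistant", "Barcelona might be a great option.")
print(chat.build_prompt("What about restaurants there?"))
```

Because the earlier turns travel with the new message, the model can resolve "there" to Barcelona. The trimming policy is also a UX decision: whatever falls outside the window is effectively forgotten, so long conversations may need summaries or a visible way to restate context.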

Practical Process:

Intent Mapping: Document and map out user goals.

Knowledge Organization: Plan how data will be stored and retrieved.

Conversation Flow Design: Create detailed interaction diagrams.

Prototype Testing: Use methods such as Wizard of Oz testing, where a human simulates the AI behind the scenes, to test interactions before the system is built.

Iteration: Refine based on real user feedback.

Challenges and Ethical Considerations

While AI-first design opens up exciting possibilities, it also brings challenges that require careful thought:

Data Privacy: Handle user data responsibly.

Trust Building: Design to build appropriate trust in AI responses.

Handling Ambiguity: Develop graceful error-recovery strategies.

Accessibility: Ensure designs are inclusive.

Bias Mitigation: Actively address and correct biases.

Conclusion: Applying AI Concepts in Your Design Work

As AI continues to reshape the digital landscape, embracing AI-first design principles will empower UX designers to create smarter, more adaptive experiences. By understanding RAG, vector databases, intents, and LLMs, you’ll be equipped to design interfaces that feel intuitive, personalized, and trustworthy.

Further reading:

Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks

https://arxiv.org/abs/2005.11401

What Is Retrieval-Augmented Generation, aka RAG?

https://blogs.nvidia.com/blog/what-is-retrieval-augmented-generation/

Nexocode on RAG

https://nexocode.com/blog/posts/retrieval-augmented-generation-rag-llms/

SuperAnnotate on RAG Explained

https://www.superannotate.com/blog/rag-explained

Towards a Human-like Open-Domain Chatbot

Copyright 2025 by Lana Holston